
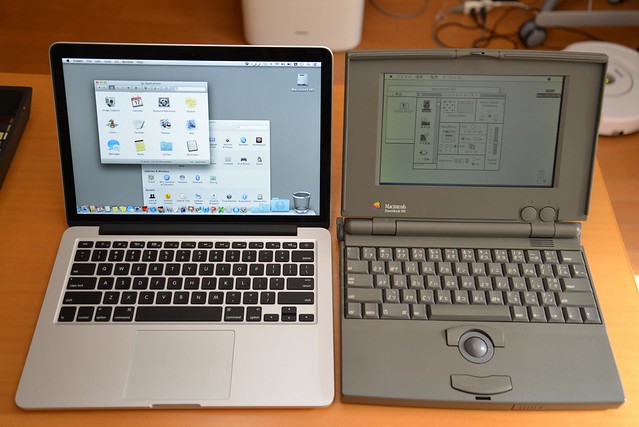

by Flickr user raneko


Who would have thought? Me, switching to a Mac? But it actually happened. I've been a long-time Linux user, so why make the switch now? Or ever? Have a look at my thoughts and insights as a novice Mac user.

In the beginning

My humble beginnings were with Red Hat 6.0 on an AMD Duron 600 MHz PC, with a dial-up connection. Setting that thing up to connect to TPSA, Poland's national provider, was rather painful. After some time with Linux, moving from Red Hat to Slackware, I decided things weren't tough enough and switched to the BSDs. That was really fun! I used the Free and Open flavors for a few years, and they were really great. Then I switched back to Linux. I used these *nix systems for all things computer, at home and at work.

The switch

And then came the new job, and I had to choose: a regular Dell or a MacBook? Blue pill or red pill? I chose the latter.

The switch wasn't that painful, mainly thanks to the superb hardware Apple offers. Getting used to a completely new OS was quite another story. It's not that I was shocked by it: as a long-time Linux user, I already knew all the concepts. It's the other way around. A lot of things I have learned to expect from my work tools are missing.

What I really appreciate are the familiar userland tools, since there is BSD at the heart of it. Of course, the kernel is XNU, a somewhat wacky hybrid built around the Mach microkernel, but as far as the userland goes I'm happy :) You can read more about the kernel and the system's design history on Wikipedia (OS X, Darwin, XNU).

One of the biggest disappointments was the filesystem and the way it's presented to users. Mac OS X comes with the HFS+ filesystem, which by default is case-insensitive!!! To me this seems like an abomination. Plus, Apple's engineers took multiple shortcuts, for example with endianness: before the Intel transition, Macs used PowerPC chips running in big-endian mode, but since the switch to Intel processors everything is little-endian. AFAIK HFS+ still has to swap bytes when reading metadata, because its on-disk structures remain big-endian.
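Both points can be checked from a script. The sketch below is a minimal illustration assuming Python 3: `is_case_sensitive` (a hypothetical helper) probes a directory by creating a temporary lower-case file and looking it up by its upper-case name, and `read_be_u16` (also hypothetical) decodes a big-endian field the way a little-endian Intel Mac has to when reading HFS+ metadata. The HFS+ volume header starts with the two signature bytes `H+`, used here as sample input.

```python
import os
import struct
import tempfile


def is_case_sensitive(directory: str) -> bool:
    """Return True if the filesystem holding `directory` distinguishes name case."""
    # tempfile's random names use lower-case letters, digits and '_';
    # the prefix is lower-case too, so .upper() always changes the name.
    with tempfile.NamedTemporaryFile(prefix="case_probe_", dir=directory) as probe:
        upper_name = os.path.basename(probe.name).upper()
        # On a case-insensitive volume (e.g. default HFS+) the upper-case
        # spelling resolves to the very same file, so it "exists".
        return not os.path.exists(os.path.join(directory, upper_name))


def read_be_u16(buf: bytes, offset: int = 0) -> int:
    """Decode a big-endian unsigned 16-bit field, as stored in HFS+ metadata."""
    # '>' forces big-endian order; on a little-endian CPU this implies a byte swap.
    (value,) = struct.unpack_from(">H", buf, offset)
    return value


if __name__ == "__main__":
    print("case-sensitive temp dir:", is_case_sensitive(tempfile.gettempdir()))
    signature = b"H+"  # first two bytes of an HFS+ volume header
    print(hex(read_be_u16(signature)))             # 0x482b
    print(hex(struct.unpack("<H", signature)[0]))  # 0x2b48, the wrong little-endian reading
```

The second `print` shows what happens if the field is read in the CPU's native little-endian order without swapping: the two bytes come out reversed.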

A really neat thing I found out recently is that, under the hood, OS X uses PF (OpenBSD's packet filter) as its firewall. I don't know which version ships in the current release or how it compares against the original implementation, but since PF has such nice syntax and good performance, it's great to have it on board. There are numerous blog posts about setting up a decent firewall on OS X with PF, so go have a look. You can also play with PF using a set of apps called Murus.
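To start experimenting, a small PF ruleset can be generated and syntax-checked before it is loaded. The sketch below assumes Python 3. `render_ruleset` and the port list are hypothetical, and the output is a deliberately minimal default-deny ruleset, not the `/etc/pf.conf` Apple ships. `pfctl -nf FILE` only parses the file. Loading it (`sudo pfctl -f FILE`) and enabling PF (`sudo pfctl -e`) are left to you.

```python
import tempfile
from typing import Iterable


def render_ruleset(allowed_tcp_ports: Iterable[int]) -> str:
    """Build a minimal default-deny PF ruleset (hypothetical example)."""
    lines = [
        "set skip on lo0",          # never filter loopback traffic
        "block in all",             # default: drop unsolicited inbound packets
        "pass out all keep state",  # allow outbound traffic and its replies
    ]
    for port in allowed_tcp_ports:
        # PF uses last-match-wins, so these pass rules override "block in all"
        lines.append(f"pass in proto tcp from any to any port {port} keep state")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    rules = render_ruleset([22])  # e.g. keep SSH reachable
    with tempfile.NamedTemporaryFile("w", suffix=".conf", delete=False) as f:
        f.write(rules)
    print(rules)
    print(f"Check syntax with: sudo pfctl -nf {f.name}")
```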

Useful tools

- Brew (Homebrew) - a package manager that offers all the things I've become used to on Linux

- Amethyst - tiles windows with keyboard shortcuts and has focus-follows-mouse :D I love this feature, although, with windows popping up all over the place, I must admit it sometimes gets messy.

- MenuMeters - puts a bunch of those geeky system meters in the menu bar (no longer works with El Capitan)

- Alfred - seems like a nice app, Spotlight on steroids. It's free and you can download it via iTunes

- Flashlight - adds more providers to Spotlight. Unfortunately, since the recent introduction of rootless mode (System Integrity Protection), it's no longer possible to use this tool

Hacking Spotlight

Spotlight is that neat little thing that seems to index everything and launches installed programs. But it can also serve as a calculator and …

You can also read the contents of its cache file.
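Spotlight's index can also be queried from the terminal with the `mdfind` command that comes with OS X. The sketch below is a minimal Python 3 wrapper around it. `build_mdfind_cmd` and `spotlight_search` are hypothetical helper names. The sample query uses the `kMDItemContentType` metadata attribute to list application bundles.

```python
import subprocess
import sys
from typing import List, Optional


def build_mdfind_cmd(query: str, only_in: Optional[str] = None,
                     by_name: bool = False) -> List[str]:
    """Assemble an mdfind invocation without running it."""
    cmd = ["mdfind"]
    if only_in:
        cmd += ["-onlyin", only_in]  # restrict the search to one directory tree
    if by_name:
        cmd.append("-name")          # match file names instead of a metadata query
    cmd.append(query)
    return cmd


def spotlight_search(query: str, only_in: Optional[str] = None,
                     by_name: bool = False) -> List[str]:
    """Run mdfind and return the matching paths (macOS only)."""
    result = subprocess.run(build_mdfind_cmd(query, only_in, by_name),
                            capture_output=True, text=True, check=True)
    return [line for line in result.stdout.splitlines() if line]


if __name__ == "__main__" and sys.platform == "darwin":
    apps = spotlight_search("kMDItemContentType == 'com.apple.application-bundle'",
                            only_in="/Applications")
    print(len(apps), "application bundles indexed under /Applications")
```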

Up until version 10.10.4 of OS X it was possible to add extensions to Spotlight, but now the system blocks this. http://mac-how-to.wonderhowto.com/how-to/customize-spotlight-search-mac-os-x-yosemite-0160786/

Script setup

Set up your Mac with this shell script, but be very careful and read through the file first! https://gist.github.com/brandonb927/3195465

by Flickr user blakespot


Neat things

It's quite encouraging that the Mac OS X developers care about small but extremely important things, like building OpenSSH with LibreSSL support!

DTrace

Recently I experienced a huge slowdown on my Mac. IT support's solution was to reinstall the OS. I refused: this seemed like a barbaric method, and I also wanted to use the opportunity to delve deeper into the internals of this OS.

I started by analysing the system's behaviour with DTrace, the dynamic tracing framework that originated in Solaris.

Useful introductory links are here:

- http://dtrace.org/blogs/brendan/2011/10/10/top-10-dtrace-scripts-for-mac-os-x/

- http://dtrace.org/blogs/brendan/2012/11/14/dtracing-in-anger/
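In the spirit of those posts, a first look at a slow system can be as simple as counting system calls per process. The sketch below wraps that classic one-liner in Python 3. `build_dtrace_cmd` is a hypothetical helper. It appends a `tick` probe so the aggregation is printed after a fixed interval. The script must run as root, and on El Capitan and later, System Integrity Protection may restrict what DTrace is allowed to trace.

```python
import subprocess
import sys
from typing import List

# D program: count syscall entries, keyed by the name of the calling process.
SYSCALLS_BY_PROCESS = "syscall:::entry { @[execname] = count(); }"


def build_dtrace_cmd(program: str, seconds: int = 10) -> List[str]:
    """Return a dtrace argv that runs `program` and exits after `seconds`."""
    # The tick probe fires after the interval; exit(0) makes dtrace
    # print its aggregations and terminate.
    timed = f"{program} tick-{seconds}s {{ exit(0); }}"
    return ["dtrace", "-n", timed]


if __name__ == "__main__" and sys.platform == "darwin":
    # Needs root, e.g. `sudo python3 this_script.py`.
    out = subprocess.run(build_dtrace_cmd(SYSCALLS_BY_PROCESS, 10),
                         capture_output=True, text=True, check=True)
    print(out.stdout)
```

The process names with the largest counts are a reasonable first hint at which process is keeping the machine busy.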

Conclusion

All in all, the switch was relatively painless, and with the tools and tricks described here I feel very comfortable using this system.
